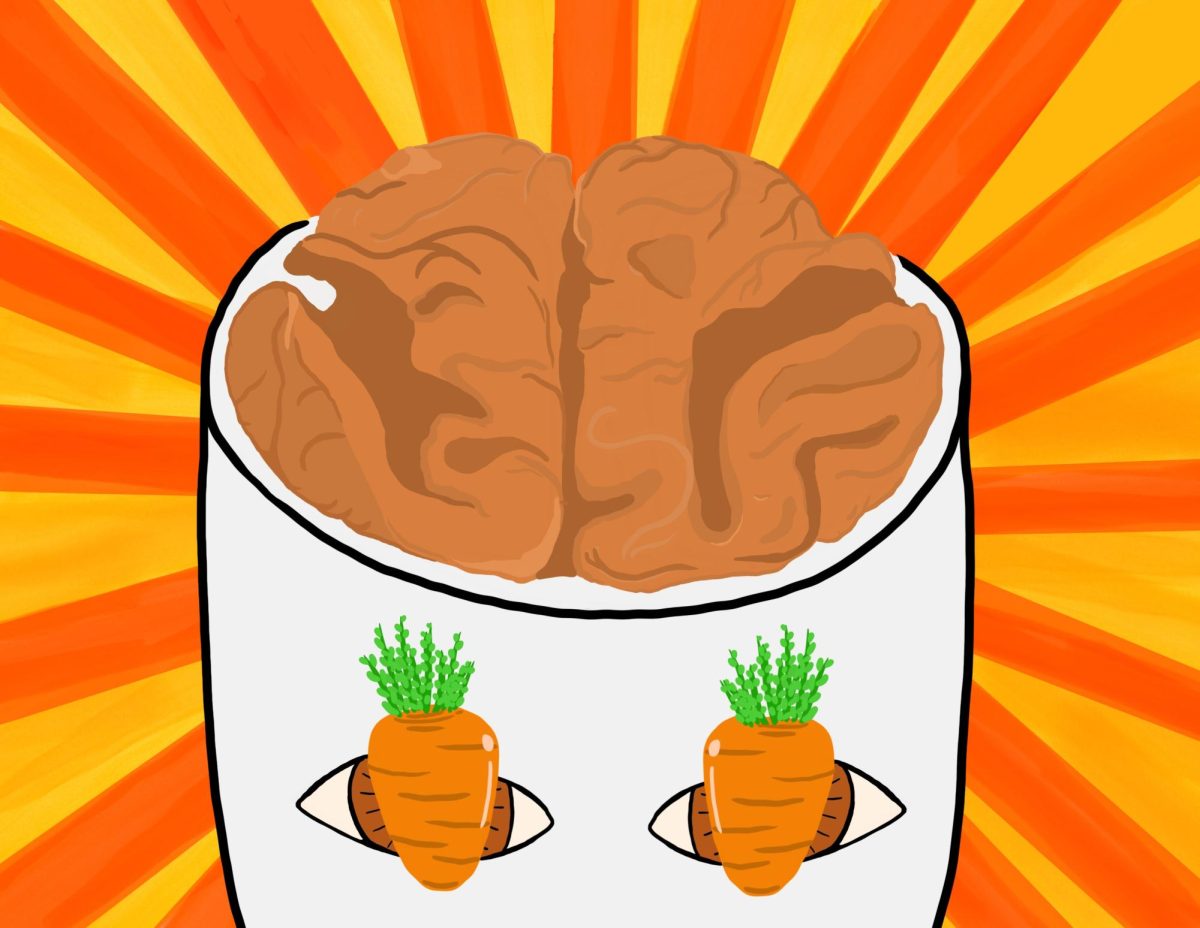

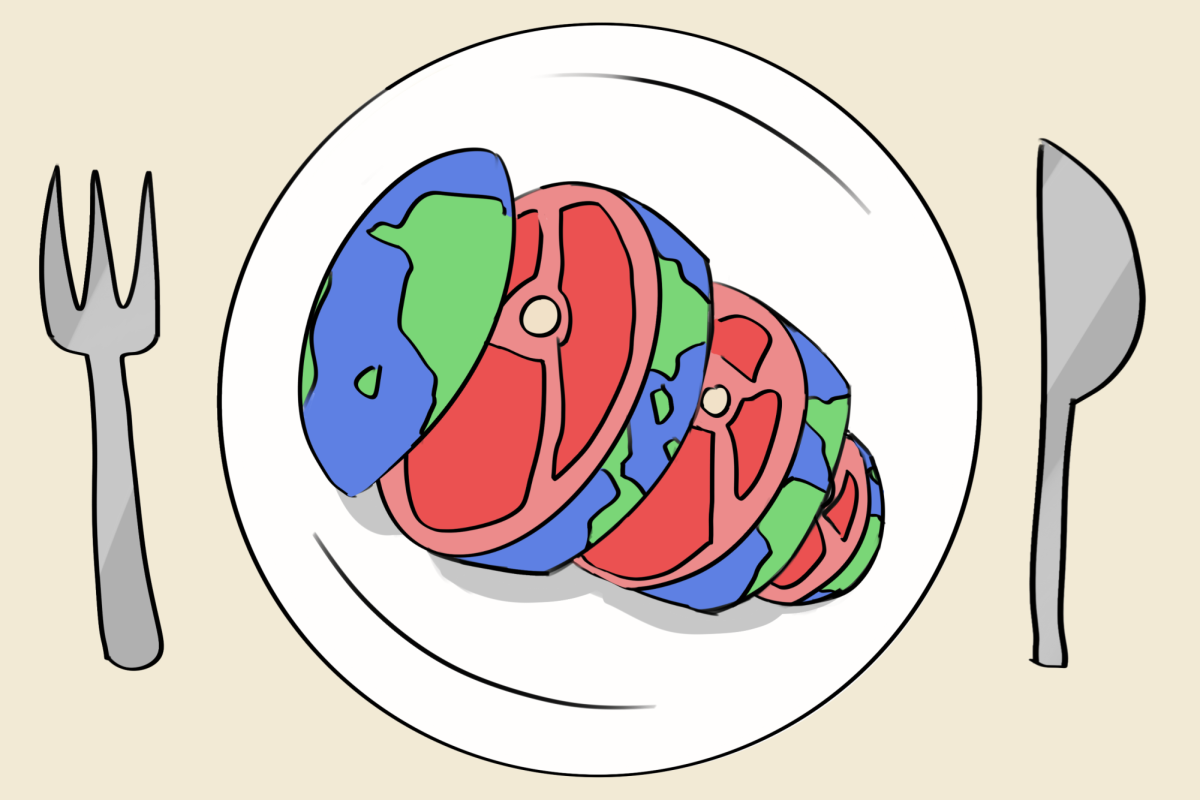

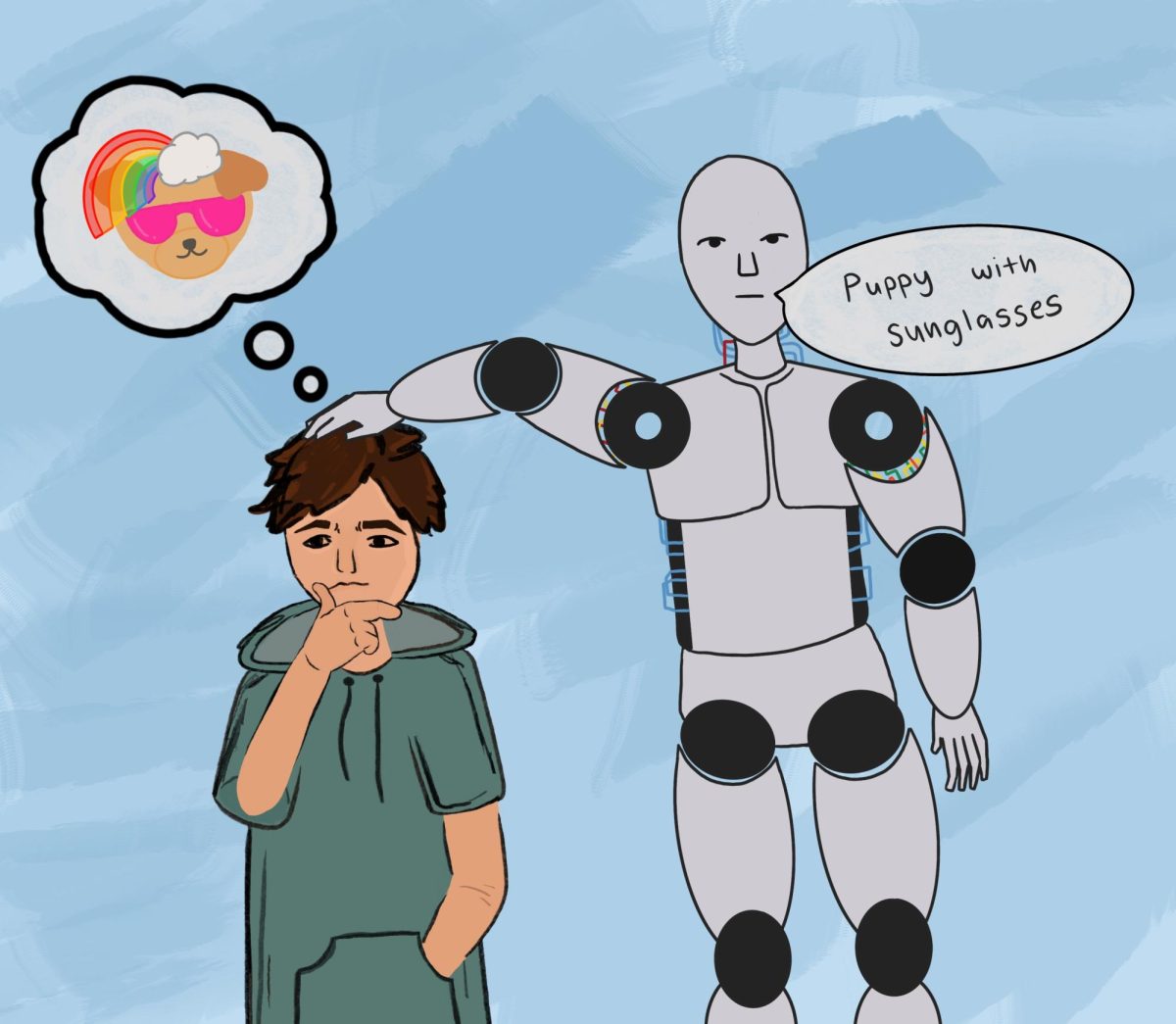

For decades, mind reading has existed only in science fiction and magic tricks. However, recent advances in artificial intelligence (AI) and neuroscience are bringing researchers closer to making this seemingly impossible task a reality. Scientists have now developed AI systems capable of decoding brain signals and rendering a person’s inner speech as text.

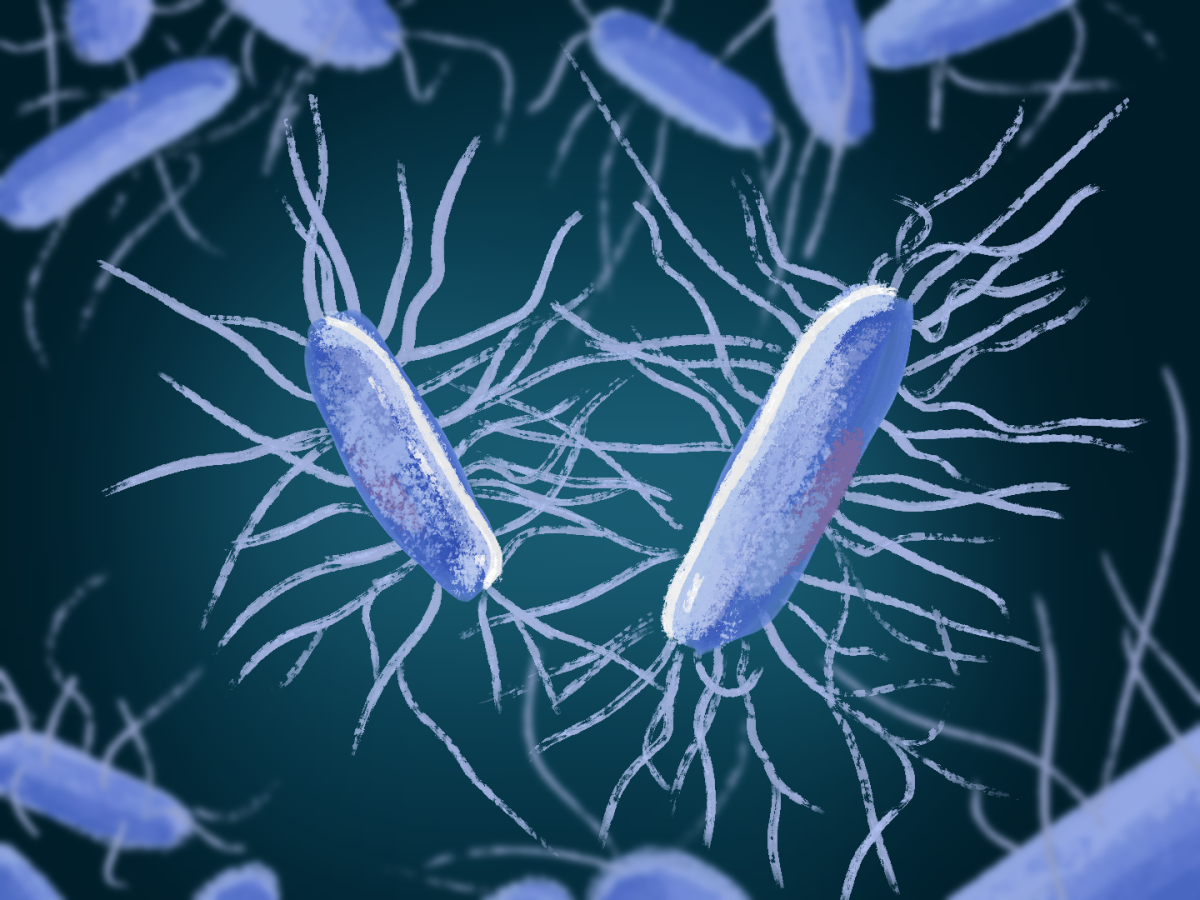

Neuroscientists at the University of Texas at Austin, led by researcher Alexander Huth, have recently introduced what they call a “semantic decoder,” an AI model that can convert patterns of brain activity into sentences. As described in their study published in Nature Neuroscience, the system analyzes signals from functional magnetic resonance imaging (fMRI), a brain imaging technique that measures changes in blood flow to determine which regions of the brain are active during certain tasks.

To train the model, participants spent hours listening to stories inside an fMRI scanner while the AI learned how different patterns of brain activity corresponded to the meanings of words and sentences. Once trained, the system could analyze new brain scans from the same participants and generate text that reflected the general meaning of what they were hearing or imagining.
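For readers curious about what "learning how patterns of brain activity correspond to meaning" can look like in practice, here is a toy sketch in Python. It is not the researchers' pipeline: the data are synthetic random numbers, and the function names (`fit_ridge`, `decode`), the array sizes, and the choice of ridge regression with a correlation-based match are all assumptions made for illustration. The idea shown is to fit a model that predicts a brain response from a numeric representation of meaning, then pick, from several candidate meanings, the one whose predicted response best matches an observed scan.

```python
import numpy as np

def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: weights that map features X to responses Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

def decode(response, candidates, W):
    """Return the index of the candidate whose predicted response best matches."""
    predicted = candidates @ W  # shape: (n_candidates, n_voxels)
    # Pearson correlation between each predicted pattern and the observed one
    pred_centered = predicted - predicted.mean(axis=1, keepdims=True)
    resp_centered = response - response.mean()
    scores = (pred_centered @ resp_centered) / (
        np.linalg.norm(pred_centered, axis=1) * np.linalg.norm(resp_centered)
    )
    return int(np.argmax(scores))

# Synthetic stand-ins (assumptions): 16-dimensional "meaning" vectors, 200 "voxels"
rng = np.random.default_rng(0)
n_train, n_features, n_voxels = 500, 16, 200
true_W = rng.normal(size=(n_features, n_voxels))

# "Training": simulated responses recorded while meanings are presented
X_train = rng.normal(size=(n_train, n_features))
Y_train = X_train @ true_W + 0.5 * rng.normal(size=(n_train, n_voxels))
W_hat = fit_ridge(X_train, Y_train)

# "Decoding": a new noisy response produced by one of five candidate meanings
candidates = rng.normal(size=(5, n_features))
target = 3
observed = candidates[target] @ true_W + 0.5 * rng.normal(size=n_voxels)
best = decode(observed, candidates, W_hat)
print("decoded candidate:", best, "| true candidate:", target)
```

In this sketch the candidate meanings are handed to the decoder directly. A real system would need some way to propose plausible candidate phrases, which is one role a language model could play.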

The results were striking. In one experiment described in the Nature Neuroscience study, when a participant listened to a sentence about someone walking into a house, the AI-generated text described a similar idea of someone entering a building. Although the wording was not always exact, the model successfully captured the overall meaning of the participant’s thoughts.

Unlike earlier brain-computer interface technologies, this system does not require surgery or implanted devices. Instead, it relies on noninvasive brain imaging and large language models, the same type of AI used in modern chatbots. According to Huth’s research team, this combination allows the system to interpret complex patterns in brain activity that correspond to semantic meaning rather than individual words.

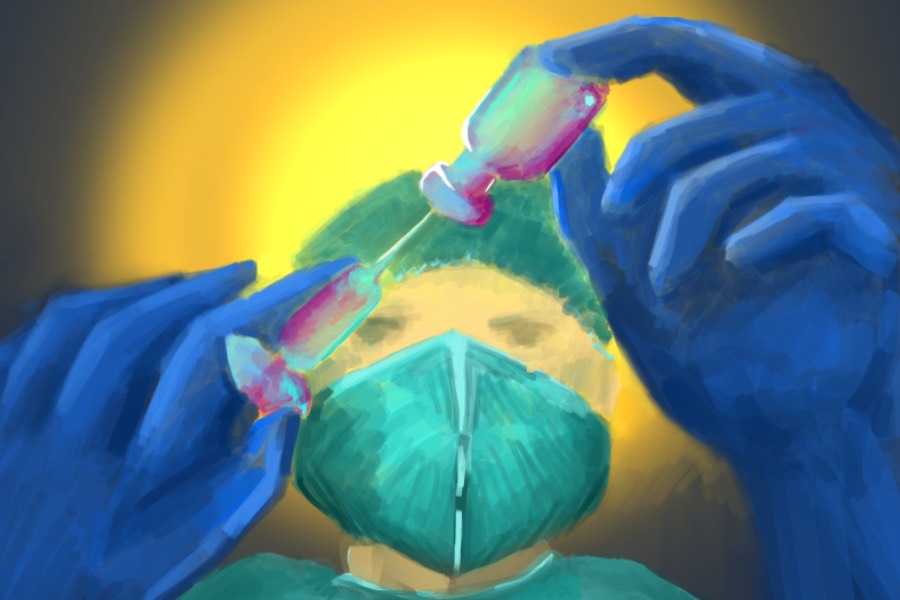

One potential application of this technology is helping people who cannot speak. Patients with conditions such as paralysis or certain neurological disorders often retain the ability to think and understand language but lose the physical ability to communicate. AI systems that translate brain signals into text could eventually provide a new communication pathway for these individuals.

However, the technology is still in its early stages. The current system requires participants to remain inside an fMRI scanner, which is expensive and not easily accessible in everyday environments. Additionally, the decoder only works accurately after being trained extensively on a specific individual’s brain activity. According to researchers from the University of Texas, the model cannot currently read the thoughts of someone who has not willingly participated in the training process.

The emergence of this technology, which could intrude on the privacy of a person’s thoughts, also raises ethical questions. As AI becomes increasingly capable of interpreting brain signals, scientists and ethicists are considering how to protect mental privacy. In interviews with MIT Technology Review, Huth emphasized that the technology should only be used with full consent and with safeguards to prevent misuse.

Despite these concerns, researchers believe the work represents a major step forward in understanding how the brain encodes language and meaning. By combining neuroscience with powerful machine learning systems, scientists are beginning to uncover patterns that were previously impossible to detect.

While true mind reading remains far from reality, these advances suggest that decoding the language of the brain may one day transform communication, medicine, and our understanding of human thought itself.