The human brain is one of the most complex and intriguing products of nature. The human ability to process new information, recognize patterns, and learn from mistakes is unrivaled by other animals and by machines. Researchers, however, are trying to change that.

In the last half century, computing has been largely defined by complex digital algorithms, but artificial intelligence remains constrained by conventional processor architectures and the memory available to them. Try as they might, computers are simply not cut out for the same tasks that an organic brain can perform. They are far less energy efficient, and given the massive amounts of data that need to be stored, their memories are often inadequate.

That’s where neuromorphic computers can help. Neuromorphic computers, or computers modeled on an organic brain, are much better equipped for in-memory and parallel processing. They differ not only in the way they perform computations but also in the fundamental properties of the hardware itself.

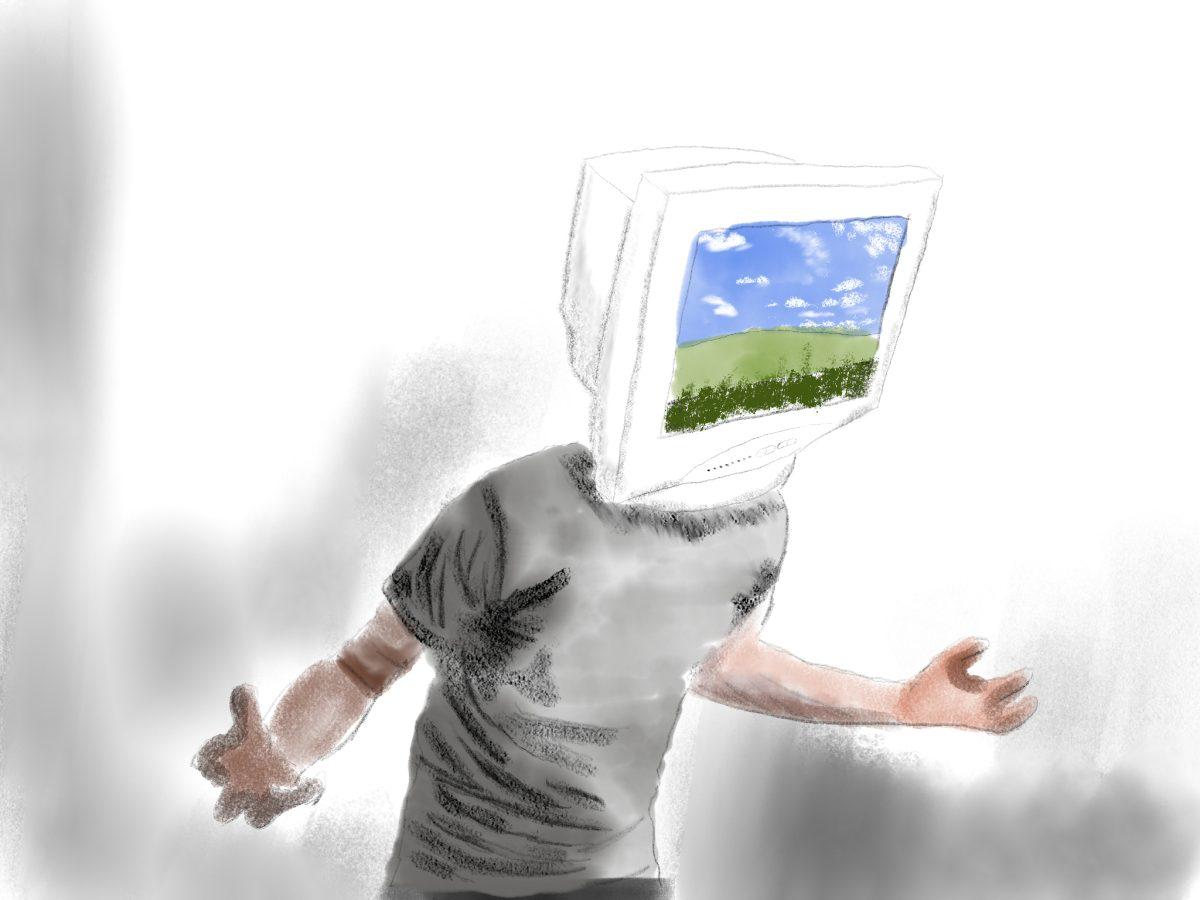

Modern digital computers vary in speed and power, but they almost always operate under the laws of electricity and the physics of semiconductors. Neuromorphic ones, however, model nature: they are made of artificial synapses and neurons. As a result, these new artificial minds could take on activities that a human brain performs. Pattern recognition, image and audio processing, and even creative output all become possible, thanks to data stored in an analog fashion rather than in bits.
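To make the idea of an artificial neuron a little more concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a common simplified model used in neuromorphic research. The article itself does not say which neuron model any particular chip uses, so the model choice, the function name `simulate_lif`, and the parameter values are all illustrative assumptions.

```python
def simulate_lif(input_currents, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron (illustrative model only).

    The neuron keeps a continuous "membrane potential" that decays by the
    factor `leak` each step and grows with the incoming current. When the
    potential reaches `threshold`, the neuron emits a spike and resets.
    All parameter values here are hypothetical, chosen for readability.
    """
    potential = reset
    spikes = []
    for current in input_currents:
        # Leak part of the stored potential, then integrate the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)      # fire
            potential = reset     # start accumulating again
        else:
            spikes.append(0)      # stay silent
    return spikes


# A weak, constant input makes the neuron fire only every few steps.
spike_train = simulate_lif([0.3] * 10)
print(spike_train)
```

The state that matters here is a continuous value, the potential, rather than a stored bit, which is a rough software analogue of the analog storage described above.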

These two styles of computing could complement one another in systems that combine neuromorphic and traditional computers. Such hybrid technology would allow artificial intelligence to disconnect from the cloud, process images in real time, or drive the first fully autonomous vehicles. It could even help solve humanity’s greatest problems with intellect equal to or greater than our own.

To create a truly neuromorphic machine, however, scientists must first overcome a formidable set of obstacles. While some pseudo-neuromorphic computers have been built and tested, they are relatively primitive by human standards. For instance, one of the most sophisticated chips ever developed, IBM’s TrueNorth chip, has over 268 million artificial “synapses” and reportedly consumes ten thousand times less energy than a traditional chip of the same size. While this sounds impressive, it is worth noting that the human brain, in comparison, contains by common estimates somewhere between 100 trillion and one quadrillion synapses.
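The size of that gap is easier to appreciate with a little arithmetic. The sketch below uses the TrueNorth figure quoted above and takes one quadrillion as the brain's synapse count. It compares raw synapse counts only, which is a simplifying assumption: it says nothing about wiring, learning, or whether the two kinds of synapse are comparable. The function name `chips_to_match_brain` is hypothetical.

```python
# Figures quoted in the article; the brain figure is a rough estimate.
TRUENORTH_SYNAPSES = 268_000_000              # "over 268 million"
BRAIN_SYNAPSES = 1_000_000_000_000_000        # one quadrillion


def chips_to_match_brain(chip_synapses: int, brain_synapses: int) -> int:
    """Whole number of chips needed to reach the brain's synapse count."""
    # Ceiling division using integers only, so no rounding error creeps in.
    return -(-brain_synapses // chip_synapses)


ratio = BRAIN_SYNAPSES / TRUENORTH_SYNAPSES
chips = chips_to_match_brain(TRUENORTH_SYNAPSES, BRAIN_SYNAPSES)

print(f"Brain-to-chip synapse ratio: about {ratio:,.0f} to 1")
print(f"Chips needed by synapse count alone: {chips:,}")
```

By this rough count, it would take several million such chips to match a single brain.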

Furthermore, the computer’s “brain” would need to meet a set of criteria before it could be considered truly neuromorphic. Although these criteria vary wildly from expert to expert, common factors almost always include decision making, learning, planning for the future, and the ability to communicate using a common language.

Beyond these functional requirements, there are also less objective conditions. Some experts argue that the machine should be able to pass the famous Turing test, in which a computer convincingly passes itself off as human in conversation. Others go further and ask that it demonstrate the capacity for subjective experience and consciousness, a nebulous area.

As neuromorphic machines advance, it is apparent that the frontiers of computing will evolve past binary code and silicon chips alone. The minds of the future are being created today, and they may be more familiar than we expect.