by Ayush Raj (’23) | November 16, 2020

In March 2019, the CEO of a UK-based energy company received a call from the chief executive of his German parent company, ordering him to transfer €220,000 to a Hungarian supplier. A follow-up email matched the instructions given on the phone, so the CEO promptly transferred the money. Once the transfer was completed, however, the money disappeared: it was moved from Hungary to Mexico and on to other parts of the world. The UK company’s CEO had been tricked by a fraudster equipped with artificial intelligence (AI), who used “deepfake” software to sound just like the CEO’s boss. The software was so convincing that it imitated not just the boss’s voice but also his tonality, punctuation, and German accent.

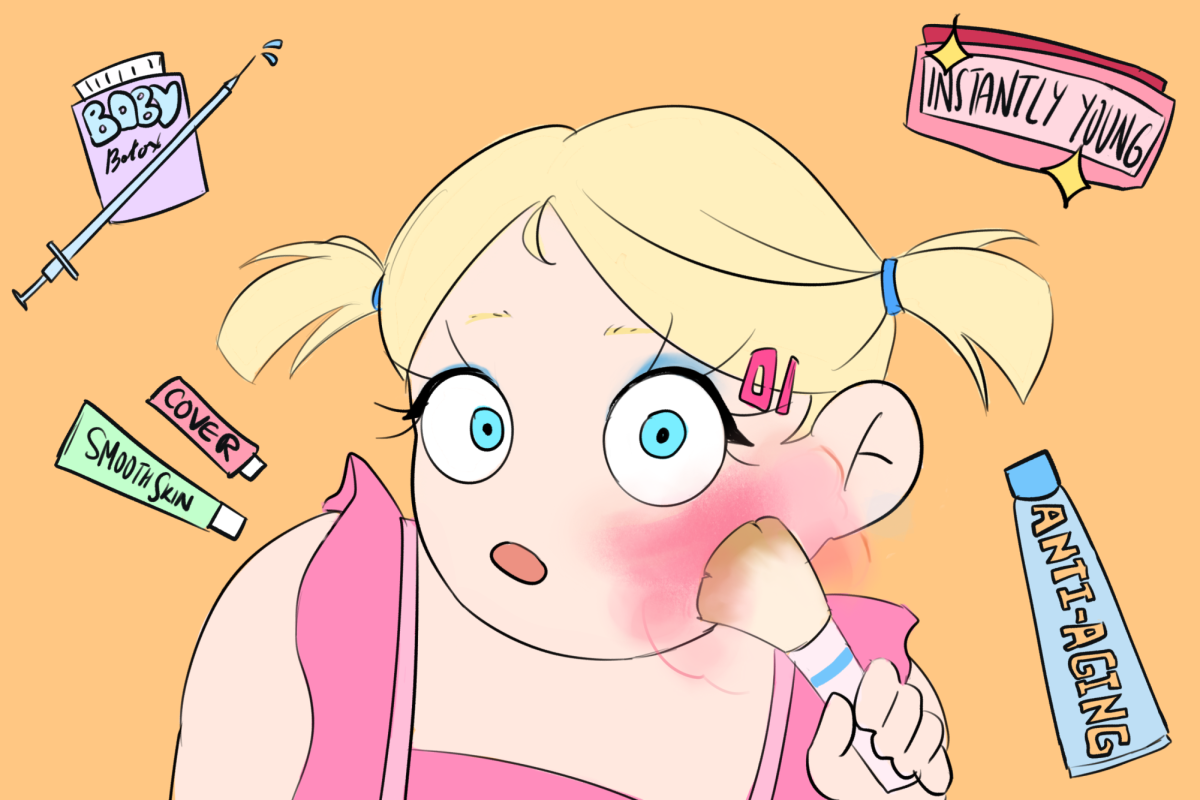

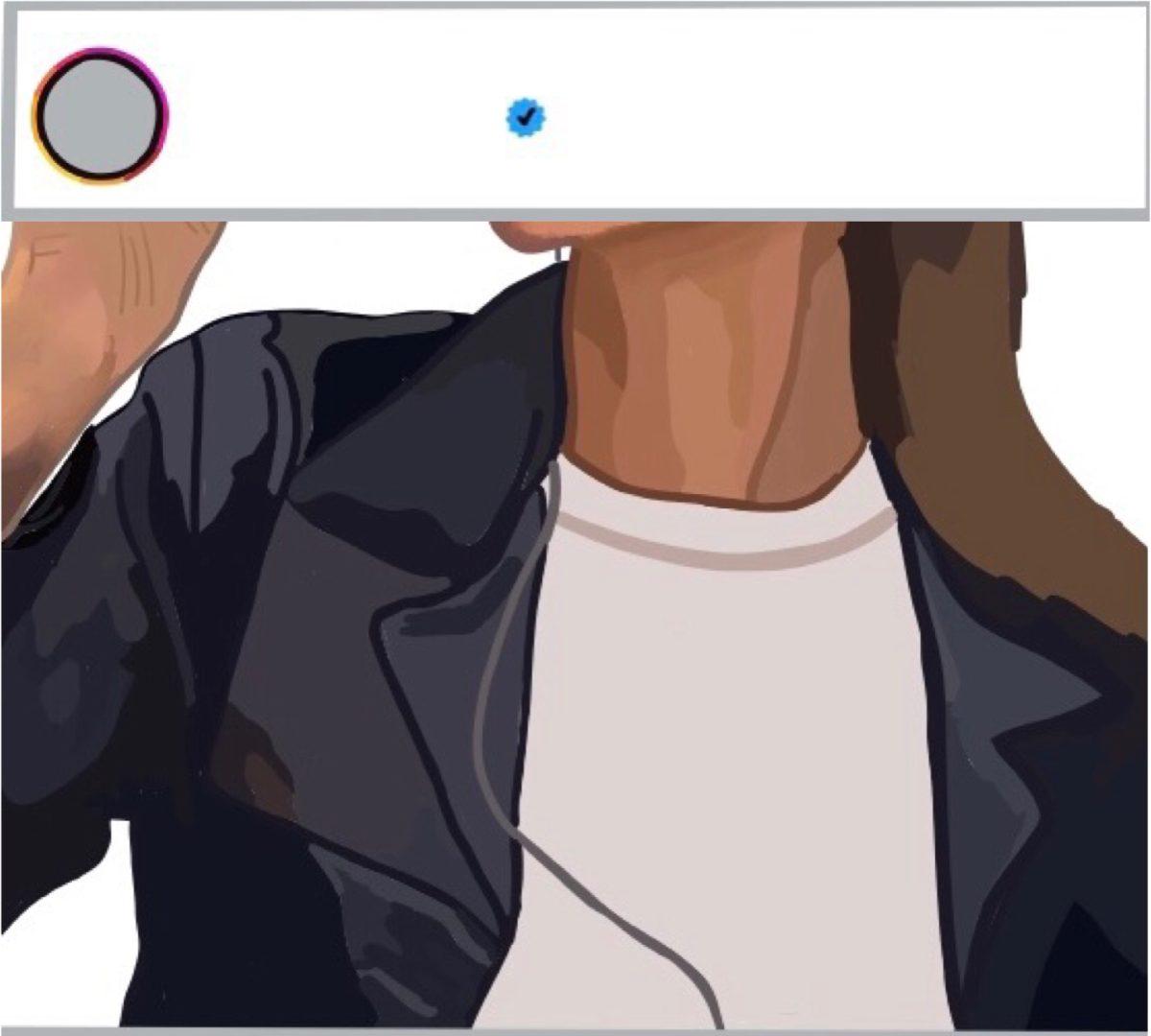

According to Merriam-Webster, a deepfake is an image or recording that has been convincingly manipulated to misrepresent someone as doing or saying something that was not actually done or said. Deepfakes are made with deep learning, a subfield of AI, and the doctored videos and photos they produce challenge our assumptions about what is real and what is not.

The ability to create videos like these has existed for decades. However, it used to take a studio full of experts months of work to produce such effects. Now, with the help of AI, it can take just a few hours, depending on the complexity of the deepfake.

Deepfakes are built with deep neural networks, a type of AI model that excels at finding patterns in data. Many deepfake tools are structured as “autoencoders,” each made of an encoder that compresses an image into a compact representation and a decoder that reconstructs the image from it. A well-trained autoencoder can also perform other tasks, such as generating new images or removing noise from grainy ones. When trained on a few hundred images of a face, an autoencoder learns the features unique to that face, such as the finer details of the eyes and the shapes of the nose, mouth, and eyebrows. Deepfake applications use two autoencoders: one is trained on the face of the original person, and the other on the face of the target. The application then crosses the two, decoding the original person’s compressed face with the target’s decoder, to transfer the facial movements of the original person onto the target person.
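The swap can be illustrated with a deliberately simplified sketch. It assumes a design common in open-source face-swap tools, where the two autoencoders share a single encoder and each face gets its own decoder, so both faces are compressed into the same kind of code. To stay small and exact, it replaces deep networks with a linear autoencoder (a PCA encoder plus least-squares decoders, written with NumPy) and replaces photos with synthetic 64-number vectors in which identity is a fixed offset and expression varies along four shared directions. All names and sizes are hypothetical; real tools train convolutional networks on aligned face crops.

```python
import numpy as np

# Hypothetical toy setup: each "face" is a flat vector of D pixels. Expression
# (mouth, eyes, pose) varies along K directions shared by everyone, while
# identity is a fixed per-person offset. We assume identity offsets are
# orthogonal to the expression directions so the sketch works out exactly.
rng = np.random.default_rng(0)
D, K, N = 64, 4, 200

Q, _ = np.linalg.qr(rng.normal(size=(D, K)))   # orthonormal expression directions (D x K)
P = np.eye(D) - Q @ Q.T                        # projector that removes expression
identity_a = P @ rng.normal(size=D)            # person A's neutral face
identity_b = P @ rng.normal(size=D)            # person B's neutral face

def make_faces(identity, n):
    """Return n frames of one person (identity plus random expression) and the expressions."""
    expressions = rng.normal(size=(n, K))
    return identity + expressions @ Q.T, expressions

faces_a, _ = make_faces(identity_a, N)
faces_b, _ = make_faces(identity_b, N)

# Shared encoder: the best linear encoder spans the top principal directions,
# so take the top-K right singular vectors of both people's per-person-centred frames.
centred = np.vstack([faces_a - faces_a.mean(axis=0), faces_b - faces_b.mean(axis=0)])
_, _, vt = np.linalg.svd(centred, full_matrices=False)
encoder = vt[:K]                               # (K x D)

def encode(x):
    """Compress D pixels per frame down to K numbers."""
    return x @ encoder.T

def fit_decoder(faces):
    """Least-squares decoder (weights plus bias row) from codes back to one person's faces."""
    codes = np.hstack([encode(faces), np.ones((len(faces), 1))])
    weights, *_ = np.linalg.lstsq(codes, faces, rcond=None)
    return weights

def decode(codes, weights):
    return np.hstack([codes, np.ones((len(codes), 1))]) @ weights

decoder_a = fit_decoder(faces_a)
decoder_b = fit_decoder(faces_b)

# The swap: encode new frames of A, then decode them with B's decoder.
new_a, expr = make_faces(identity_a, 5)
swapped = decode(encode(new_a), decoder_b)
expected = identity_b + expr @ Q.T             # B's face wearing A's expressions
print(np.abs(swapped - expected).max())        # near 0: only floating-point round-off remains
```

Decoding A’s code with A’s own decoder reconstructs A; decoding the same code with B’s decoder keeps A’s expression but renders it on B’s face. Sharing the encoder is what makes this work: both decoders learn to read the same compressed description of a facial expression.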

As deepfakes have exploded in popularity over the last couple of years, people have begun using AI to detect them. This summer, Professor Maneesh Agrawala, the director of Stanford’s Brown Institute for Media Innovation, and his colleagues at UC Berkeley unveiled technology that detects lip-sync deepfakes by spotting minute mismatches between the sounds people make and the shapes of their mouths when they talk. However, Agrawala warns that deepfake-creating AI is also getting smarter. The real task, he says, is to increase awareness in our society and to hold people accountable if they deliberately produce and spread misinformation.
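The mismatch idea can be illustrated with one simple phonetic constraint: English speakers close their lips to say M, B, and P, so a frame in which the audio contains one of those sounds while the mouth is visibly open is suspicious. The sketch below is a hypothetical toy, not the Stanford and Berkeley system. It assumes the per-frame phoneme and a normalized lip-gap measurement have already been extracted (in practice that takes speech alignment and face tracking), and its 0.1 threshold is an arbitrary assumption.

```python
from dataclasses import dataclass

# Bilabial consonants: the lips close fully to produce these sounds.
LIPS_CLOSED_PHONEMES = {"M", "B", "P"}

@dataclass
class Frame:
    phoneme: str          # hypothetical: phoneme heard in the audio at this frame
    mouth_opening: float  # hypothetical: lip gap, normalised from 0 (closed) to 1 (wide open)

def mismatch_rate(frames, max_closed_opening=0.1):
    """Fraction of M/B/P frames in which the mouth is visibly open.

    max_closed_opening is an assumed threshold, not a published value.
    """
    checked = [f for f in frames if f.phoneme in LIPS_CLOSED_PHONEMES]
    if not checked:
        return 0.0
    mismatches = sum(f.mouth_opening > max_closed_opening for f in checked)
    return mismatches / len(checked)

real_clip = [Frame("M", 0.02), Frame("AA", 0.7), Frame("B", 0.05), Frame("P", 0.0)]
fake_clip = [Frame("M", 0.35), Frame("AA", 0.7), Frame("B", 0.04), Frame("P", 0.4)]
print(mismatch_rate(real_clip))  # 0.0
print(mismatch_rate(fake_clip))  # 0.6666666666666666
```

Only frames with lips-closed sounds are checked, because open vowels such as “AA” say little about whether the lip sync is genuine. A high rate is a signal for closer inspection rather than proof of a fake.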

While it is true that deepfake technology raises many ethical issues, not everything about it is doom and gloom. Soccer superstar David Beckham recorded a malaria awareness video in only one language, but deepfake technology made it available in nine. AI-assisted synthetic media tools are also helping to create personalized advertising, personal assistive navigation apps, and unique vocal personas for people with speech disabilities. Seeing is no longer believing: deepfakes are here to stay. With enough regulation to curb their malicious uses, deepfakes can augment human progress.